Sometime back in 2011 or 2012 (my memory is hazy), I read a headline saying, “Google’s Search Quality Rater Guidelines Leaked.” Maybe it was this Search Engine Roundtable article. At the time, I had no idea there was a quality rater program, and reading their stolen handbook was exciting, like I was getting an exclusive behind-the-scenes look into their processes.

This excitement didn’t last long. The Search Quality Rater Guidelines (SQRG) is a dry document that’s 176 pages long. Also, Google started releasing these guidelines to the public in 2014, so it’s not really exclusive any longer.

Despite this, SEOs should be reading it and keeping up with new editions. Although it doesn’t point to any specific ranking factors or algorithms, it does tell us what types of problems Google is trying to solve right now.

What are Google’s Search Quality Rater Guidelines?

The Search Quality Rater Guidelines are a handbook for Google’s roughly 16,000 contractors from around the world who review live and experimental search results. These Quality Raters score result pages on two things: page quality in terms of E-E-A-T, and how well each page meets the needs behind the user’s query.

The guidelines are publicly available in PDF form, and Google recently published an overview document that summarizes the SQRG.

The SQRG is written for regular people who have signed up for the Quality Rater Program as a part-time job, not digital marketers. It contains instructions for researching the websites and authors for the rated pages, examples of how to score various pages, and guidance for specific types of content.

The Search Quality Rater Program

How does Google know if their algorithm updates will improve search results before they go live? Google uses a multi-step process to make any improvements, and the Quality Rater program plays a key role in evaluating potential updates:

- Ideation – Google engineers will comb through data to find areas of Search that need improvement and ideate systems and features that will address them.

- Development – Other engineers will research technical solutions and code systems to implement the change in search results.

- Quality Raters – Search results generated by the proposed algorithm update will be shown to the roughly 16,000 Quality Raters along with live search results. The raters will score both without knowing which results are experimental. If the experimental results score roughly as well as or better than the live search results, then the proposed algorithm update can graduate to live user testing.

- Live User Testing – Google will randomly show users results generated by the proposed algorithm update. If real users respond well to the experimental change, then it will be incorporated into Google Search for all users.

Basically, the Quality Rater Program is a quality assurance process for potential changes to Google’s Search algorithm. If Quality Raters don’t find that an experimental change improves results, the experiment goes back to Google engineers to work on it again. This allows Google to see if real people agree with how their algorithm interprets pages.
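To make the blind comparison step concrete, here is a minimal sketch in Python. It is only an illustration of the idea described above, not Google's actual process: the numeric scores, the `tolerance` parameter, and the function name `graduates_to_live_testing` are all assumptions made for this example.

```python
from statistics import mean


def graduates_to_live_testing(live_scores, experimental_scores, tolerance=0.0):
    """Return True if experimental results scored about as well as, or better
    than, live results.

    Hypothetical model: each list holds numeric rater scores for one result set.
    `tolerance` is an assumed allowance for "roughly as good".
    """
    if not live_scores or not experimental_scores:
        raise ValueError("both result sets need at least one rater score")
    return mean(experimental_scores) >= mean(live_scores) - tolerance


# Made-up rater scores on an arbitrary 1-5 scale; raters would not know
# which set is experimental.
live = [3, 4, 3, 4]
experimental = [4, 4, 3, 5]
decision = graduates_to_live_testing(live, experimental)
print("Graduate to live user testing:", decision)
```

If the function returns False, the change would go back to the engineers, which matches the loop described above.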

Quality Rater Scores Do Not Affect Live Rankings

There have been a lot of rumors over the years that Google uses the Search Quality Rater Program to demote low-scoring pages. However, Google has stated many times that the scores raters assign to the pages they review aren’t used in live rankings. It just isn’t possible for Google to use this program to change live results at scale.

Another argument against these rumors is that while 16,000 Quality Raters can review a lot of web pages, they can’t review them all. Google states that they have hundreds of billions of pages in their index. Assigning human reviewers to all of them isn’t possible, so reviewing that much content has to be the work of machines.
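A quick back-of-the-envelope calculation shows the scale problem. The index size below is an assumption (the low end of "hundreds of billions"), and the daily review rate is a made-up figure used purely for illustration.

```python
RATERS = 16_000                    # rater count cited in this article
INDEXED_PAGES = 100_000_000_000    # assumption: low end of "hundreds of billions"
PAGES_PER_DAY = 100                # assumption: hypothetical daily output per rater

# How many pages each rater would have to cover if the index were split evenly.
pages_per_rater = INDEXED_PAGES // RATERS

# How long that would take one rater at the assumed daily rate.
years_per_rater = pages_per_rater / PAGES_PER_DAY / 365

print(f"{pages_per_rater:,} pages per rater")
print(f"about {years_per_rater:.0f} years per rater at {PAGES_PER_DAY} pages a day")
```

Even with generous assumptions, each rater would need well over a century to review their share once, and the index keeps changing the whole time.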

Overview of Google’s Search Quality Guidelines

Google updates the SQRG about once a year. The latest update was in December 2022, and it adjusted the E-A-T formula a little by adding another E: Experience.

Overall, the latest changes are consistent with how Google usually updates them. They want to clarify how Quality Raters should research and interpret search results to get better-quality data. As Google’s systems advance in understanding content, they want raters to dig deeper and think harder to adequately check their work.

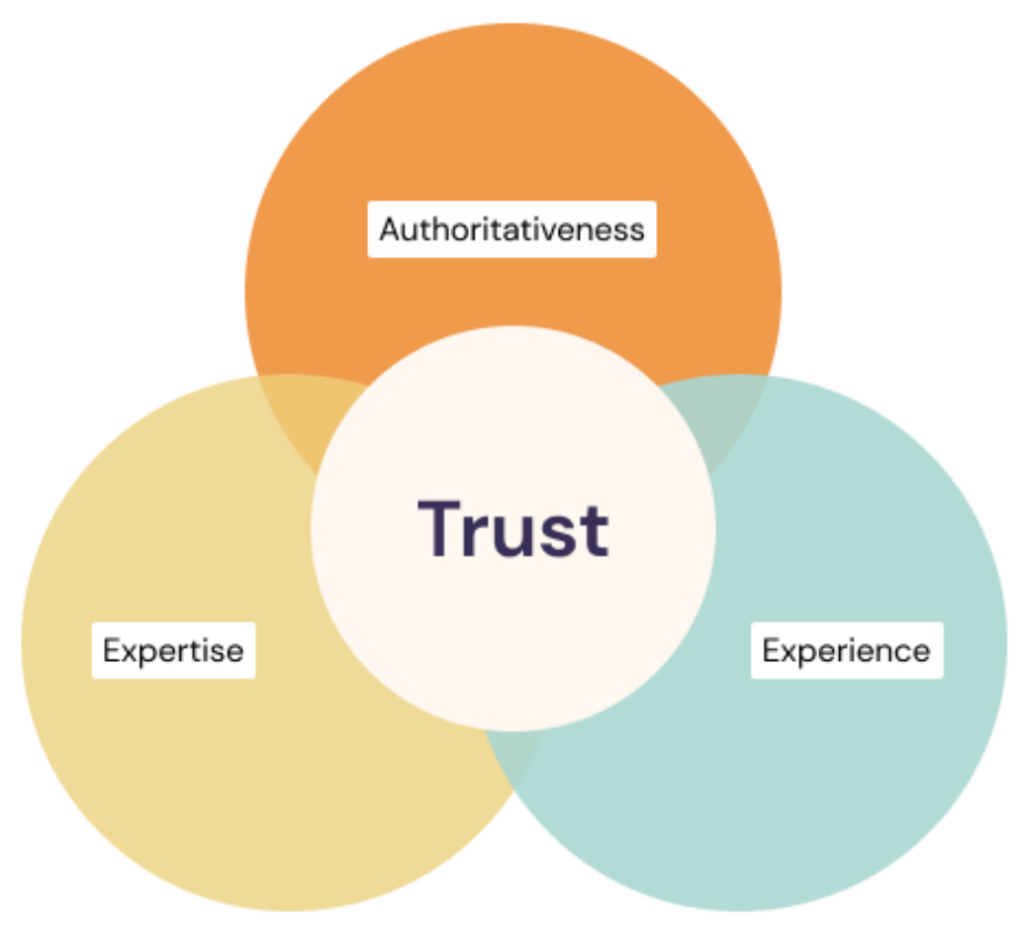

E-E-A-T

The overview we gave in our previous blog post, What Is Google’s E-E-A-T & Why Is It Important for SEO?, still matches what Google wants raters to understand about Expertise, Authoritativeness, and Trustworthiness.

The major change is how Google frames Trustworthiness as dependent on the rater’s interpretation of Expertise, Authoritativeness, and the new one, Experience.

Google’s guidance is to look at the page being reviewed and see if it has relevant trust factors. For example, online stores should have a secure payment system, visible customer service contact information, and a reputation for good customer service. Product reviews should appear to be honest and written to help the user make an informed buying decision instead of just pitching the product.

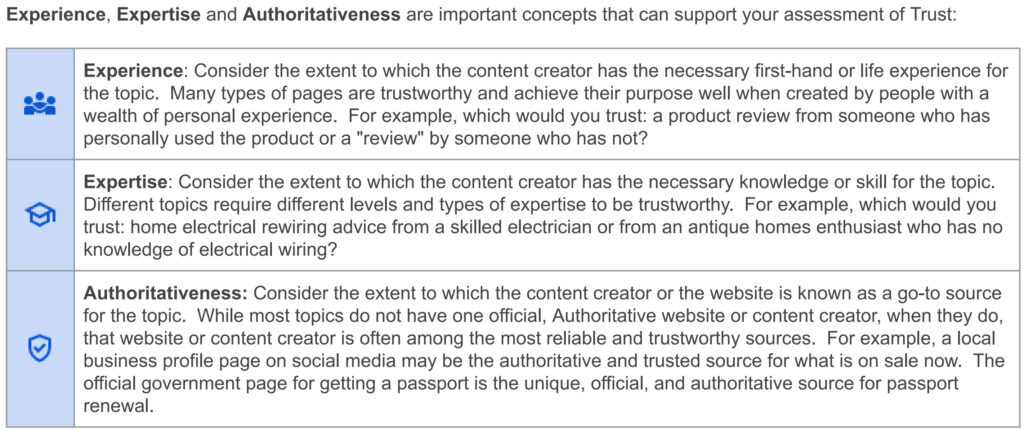

Google also provided a table that breaks down the differences between Experience, Expertise, and Authoritativeness:

Experience vs. Expertise

The difference between experience and expertise comes down to this:

Experience is judged by how much first-hand, practical experience the author has regardless of their profession or training. For example, anyone can give trustworthy advice about the safest way to slice tomatoes if they have sliced a lot of tomatoes.

Expertise is judged by how much theoretical and practical knowledge an author has due to experience and training in their profession or vocation. A medical doctor is going to have more trustworthy advice about food allergies and other life-or-death topics than someone who didn’t go to medical school.

Your Money or Your Life

Topics that could seriously affect the health or overall well-being of the reader are classified as Your Money or Your Life (YMYL) topics. This includes health and medical topics, but it also applies to websites that give financial advice.

Very importantly, websites that collect personal information, such as credit card numbers in checkout forms or contact information, are also YMYL. So, if you sell anything online or use the web to direct customers to your brick-and-mortar store, you are YMYL.

Google recommends that their Quality Raters apply the highest level of scrutiny to websites in YMYL spaces. This is important for Google’s reputation because its rankings are like an endorsement. Consumer trust in Google Search would significantly drop if following directions on top-ranking pages caused serious harm to users.

Businesses should definitely read through the SQRG to see what it takes to get the highest scores because Google’s ranking algorithms are probably looking for the same things.

1. Page Quality (PQ) Rating

The first rating is for the overall page quality of the search result and doesn’t involve search intent. Quality Raters are asked to investigate the website, the author, and their reputation to help assess E-E-A-T. Google recommends doing this research by searching for who is responsible for the content and seeing how they are talked about in the news, reviews, and on social media.

The investigation process that raters go through is very similar to what your customers will search for when deciding if they should buy from you, so the reputation of your business is very important.

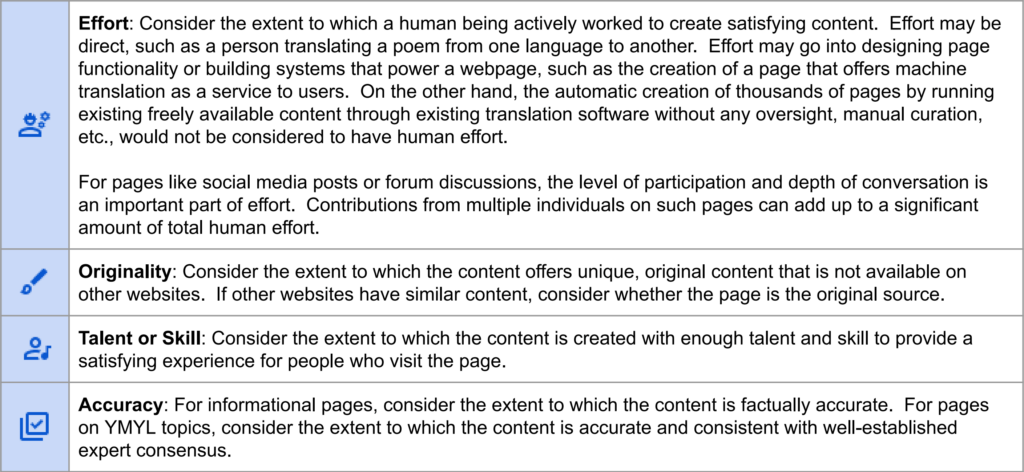

Quality Raters are also asked to determine the purpose of the page they’re reviewing and how well the page content achieves that purpose. A recent addition to this section is this table that outlines how the raters should judge purpose and execution:

This table is very helpful for content creators to understand what “high-quality content” means. Note that there is no mention of word count or content length in the table. Google thinks effort, originality, skill, and accuracy are what primarily go into content that is valuable to a Google Search user.

Given the E-E-A-T of the page, the reputation of the creator, the page’s purpose, and its execution, raters then give a Page Quality rating to the page. Google uses a scale from Lowest to Highest with gradations in between, like Medium and Medium+.

Lowest quality pages are untrustworthy, deceptive, harmful to people or society, or have other highly undesirable characteristics.

Google really wants to filter out harmful pages from their search results. If an experimental change accidentally ranks harmful pages highly, they rely on their raters to find them and mark them as such. We should assume experimental updates that boost too many harmful pages have to be worked on before going live.

If the E-E-A-T of a page is low enough, people cannot or should not use the MC (Main Content) of the page. If a page on YMYL topics is highly inexpert, it should be considered Untrustworthy and rated Lowest.

Raters should fail pages if the E-E-A-T is too low for YMYL queries. Since businesses are automatically YMYL, assume that if a rater would give your pages a Lowest rating, Google is already trying to do that with their ranking signals.

Highest quality pages serve a beneficial purpose and achieve that purpose very well. The distinction between High and Highest is based on the quality of MC, the reputation of the website and content creator, and/or E-E-A-T.

Google very much wants to release updates that position the highest quality pages at the top of the SERPs. Here, Google tells raters what that means: content from high E-E-A-T websites that benefits users and does so well.

2. Understanding Search User Needs

Google serves search results all over the world in a lot of languages (80+), and they naturally have to make sure their systems understand search intent in all of them.

They do that by providing some context to raters about the search result sets they review. Each rating task comes with these data points:

- Query – The exact wording that generated the search result set.

- Locale – Which country the hypothetical user is searching from and which language they are using.

- User location – A more specific location of the hypothetical user. It can be a city or state.

- Device – Desktop or mobile. This isn’t given specifically, but the guidelines ask the raters to think about which results are appropriate for the user’s device implied by the search results.

Given the query’s context, the raters are asked to figure out what is most likely the users’ search intent.

Google also gives the raters a broad classification of intents to consider:

- Know – When the user is researching something or trying to get a quick answer or definition.

- Do – The user is trying to accomplish something or interact with a website. This includes buying something, so transactional intent is categorized here.

- Website – The user is trying to navigate to a specific website or page. Brand searches usually have this when the user doesn’t know the URL.

- Visit-in-Person – The user is trying to get to a specific location or business. We usually call this “local” intent.

After figuring out what the intent behind the query is, raters then judge how well the page they’re reviewing addresses the query.
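The context and intent categories above map naturally onto a small data structure. The sketch below is a hypothetical model for illustration only; the class names, field names, and sample values are assumptions and do not come from Google's rating tools.

```python
from dataclasses import dataclass
from enum import Enum


class Intent(Enum):
    """The four broad intent categories listed above."""
    KNOW = "Know"
    DO = "Do"
    WEBSITE = "Website"
    VISIT_IN_PERSON = "Visit-in-Person"


@dataclass(frozen=True)
class RatingTask:
    """Context supplied with a rating task (field names are assumptions).

    Device is left out because it is not given explicitly; raters infer it.
    """
    query: str
    locale: str
    user_location: str


@dataclass(frozen=True)
class InterpretedTask:
    """A task plus the rater's judgment of the most likely intent."""
    task: RatingTask
    likely_intent: Intent


# Made-up example values.
task = RatingTask(
    query="coffee shops near me",
    locale="en-US",
    user_location="Seattle, Washington",
)
interpreted = InterpretedTask(task=task, likely_intent=Intent.VISIT_IN_PERSON)
print(interpreted.likely_intent.value)
```

Keeping the intent judgment separate from the task context mirrors the order of work: the context is given, and the intent is something the rater decides.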

3. Needs Met (NM) Rating

Finally, raters are tasked with reading the page or search feature and making a subjective determination of how well the result matches the query.

While the Needs Met rating depends on the Page Quality rating, they are different. Raters are asked to ignore the query while determining Page Quality. This reflects two goals of Google Search: differentiate between high and low-quality pages, then match queries to the most relevant pages.

Each result gets a Needs Met rating on a scale from Fails to Meet to Fully Meets, also with gradations in between.

It’s very hard for a page to get a Fully Meets rating because the intent of the query needs to have high certainty, and the result must be the “complete and perfect response or answer” to the query.

The other Needs Met ratings are straightforward:

A rating of Highly Meets is assigned to results that meet the needs of many or most users. Highly Meets results are highly satisfying and a good “fit” for the query. In addition, they often have some or all of the following characteristics: high quality, authoritative, entertaining, and/or recent (e.g., breaking news on a topic).

Highly Meets pages should have all of the characteristics of high Page Quality rated pages but also actually answer the query in a satisfying way.

A rating of Fails to Meet should be assigned to results that are helpful and satisfying for no or very few users. Fails to Meet results are unrelated to the query, factually incorrect (please check for factual accuracy of answers), and/or all or almost all users would want to see additional results.

Fails to Meet criteria are also obvious, but there are still a lot of pages out there that are basically fluff text with keywords thrown in. What’s surprising is how many brands expect content like this to perform.

The rest of the guidelines provide more details about how to perform rating tasks with lots of examples.

Why are Search Quality Rater Guidelines Important for SEO?

Anyone who touches the Organic Search channel of their brand needs to be familiar with the Search Quality Rater Guidelines. Google is essentially saying to us, “this is what we want our ranking algorithms to sort out, now and in the future.” This knowledge is invaluable for reviewing our own websites and explaining why our content performs well or takes a massive visibility dive after a core algorithm update.

While reading the SQRG, assume that Google Search is attempting to reach similar conclusions about content quality as the Quality Raters. Google isn’t necessarily performing the same research process or reading the same things.

Clues About Google’s Ranking Signals

There is no such thing as an Experience score or E-E-A-T score. Instead, Google has multiple overlapping scores that can be categorized as E, E, A, or T. The E-E-A-T label is for explaining how Google defines quality in a way that most people can understand.

What the Search Quality Rater Guidelines do is give us a clue about which aspects of a web presence Google analyzes. For example, there could be systems that read user reviews about a business on Google Maps, Yelp, etc., and then see what they indicate based on star rating, specific complaints or praise, and customer service, with each generating an individual score used in ranking.

More Than Content and Backlinks

Years ago, a winning SEO strategy was to crank out a lot of low-quality content and pay someone to create thousands of spammy backlinks. I should know; I got my start in agencies that used this strategy and did some of this myself.

After reading the SQRG all those years ago, I realized the jig was up. Google was going to reach a point where SEOs could no longer fake it, and there were no more shortcuts to high rankings. The recent Helpful Content Update is evidence of this trend, and we should expect to see more like it.

Right now, it’s not enough to have middling content and an okay backlink profile. Being competitive requires excellent content and business practices, plus the backlinks, brand mentions, and reviews that prove it.

For businesses, this means we can’t just ask for the user’s credit card number on our landing pages. Our content has to have a beneficial purpose by helping them confidently make that buying decision.

The Big Picture

Ultimately, Google wants to show search results that make its product better, so users keep coming back. Google is an advertising company first, and if only around 1.59% of searches result in an ad click, it absolutely has to maintain its monopoly over web search.

By providing better search results for Google to display, SEOs are an important part of Google maintaining its ad revenues. This means the publicly available Search Quality Rater Guidelines are part of a tacit agreement between SEOs and Google: if we make their product better, they will send us some qualified traffic instead of relying entirely on Google Ads.

The post Google’s Search Quality Evaluator Guidelines for SEO in 2023 appeared first on Portent.
